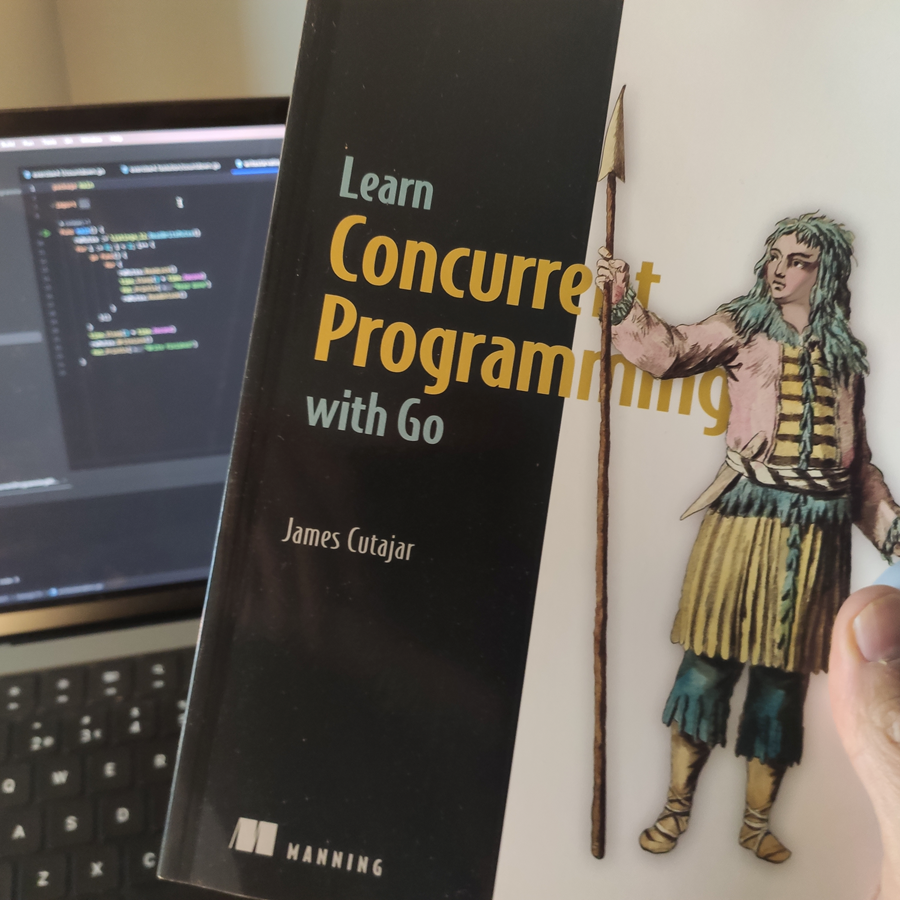

Disclaimer: The following article content and excerpts are derived from Manning Publications' article titled “Modern Concurrency with Go”. While we have restructured and integrated portions of the original content, both text and images, into our own discussion, we want to acknowledge the original author's contributions. We have taken care to provide proper attribution and references to the original source. The primary focus of this Blip repost is to highlight the expertise and contributions of James Cutajar, our Principal Software Engineer and author of the book Learn Concurrent Programming with Go, and to provide insights on the topic that are relevant to our audience. We encourage readers to explore the original article and the published book for a deeper understanding of the subject matter.

Introduction

In today's tech landscape, efficient and scalable solutions are crucial for modern applications. Concurrent programming offers a pathway to improved performance and scalability. In this article, we'll explore the first chapter of the book Learn Concurrent Programming with Go. Come along as we look at James’ perspective on concurrent execution, scaling programs, and why Go is the language of choice for concurrency.

About the Author and the Book

James Cutajar is dedicated to backend development at Blip, part of the Flutter group, and has recently published a book that provides a practical, hands-on introduction to creating software for modern multiprocessor systems. In this book, the author shows the reader how to divide larger programming tasks into independent parts that can run simultaneously, and how to use the Go language to implement common concurrency patterns using readers-writer locks, semaphores, message passing, and memory sharing.

Learn Concurrent Programming with Go explores the principles and real-world applicability of concurrent programming with Go. Unlike sequential programming, concurrent programming leverages multiple CPU cores, boosting program execution speed and overall efficiency.

“Learn Concurrent Programming with Go” Deep Dive

Interacting with a concurrent world

We live and work in a concurrent world. The software that we write models complex business processes that interact together concurrently. Even the simplest of businesses typically have many of these concurrent interactions. For example, we can think of multiple people ordering online at the same time or a consolidation process grouping packages together while simultaneously coordinating with ongoing shipments.

Concurrent programming is about writing instructions so that multiple tasks and processes can execute and interact at the same time.

Increasing throughput

For the modern developer it is ever more important to understand how to program concurrently. This is because the hardware landscape has changed over the years to benefit this type of programming.

Prior to multicore technology, processors’ performance increased proportionally to clock frequency and transistor count, roughly doubling every 2 years. Processor engineers started hitting physical limits due to overheating and power consumption, which coincided with the explosion of more mobile hardware such as notebooks and smartphones. To reduce excessive battery consumption and CPU overheating while increasing processing power, the engineers introduced multicore processors.

In addition, with the rise of cloud computing services, developers have easy access to large, cheap processing resources to run their code. All this extra computational power can only be harnessed effectively if our code is written in a manner that takes full advantage of the extra processing units.

Having multiple processing resources means we can scale horizontally. We can use the extra processors to run computations in parallel and finish our tasks quicker. This is only possible if we write code in a way that takes full advantage of the extra processing resources.

What about a system that has only one processor? Is there any advantage in writing concurrent code when our system does not have multiple processors? It turns out that writing concurrent programs is a benefit even in this scenario.

Most programs spend only a small proportion of their time executing computations on the processor. Think, for example, of a word processor that waits for input from the keyboard, or a text file search utility that spends most of its running time waiting for portions of the text files to load into memory. We can have our program perform a different task while it’s waiting for input/output. For example, the word processor can perform a spell check on the document while the user is thinking about what to type next. Similarly, the file search utility can look for a match in the file that is already loaded in memory while the next file is being read into another portion of memory.

Think, for example, of when we’re cooking or baking our favorite dish. We can make more effective use of our time if, while the dish is in the oven or on the stove, we perform some other actions instead of idling and just waiting around. This is analogous to our system executing other instructions on the CPU while the same program is concurrently waiting for a network message, user input, or a file write to complete. This means that our program can get more work done in the same amount of time.
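The file search example above can be sketched in Go. This is a minimal illustration and not code from the book: `loadFile` and `countMatches` are hypothetical names, the "disk read" is simulated with a short sleep, and the goroutine and channel it relies on are introduced later in this article. While one file is being searched, the next one is already loading.

```go
package main

import (
	"fmt"
	"strings"
	"time"
)

// loadFile is a hypothetical stand-in for a slow disk read.
// The sleep simulates time spent waiting on input/output.
func loadFile(name string) string {
	time.Sleep(20 * time.Millisecond)
	return "contents of " + name
}

// countMatches searches each file for term. While the current file is
// being searched, the next one is already loading in a separate goroutine.
func countMatches(names []string, term string) int {
	if len(names) == 0 {
		return 0
	}
	matches := 0
	current := loadFile(names[0])
	for i := range names {
		var next chan string
		if i+1 < len(names) {
			next = make(chan string, 1)
			// Start loading the next file before searching the current one.
			go func(name string) { next <- loadFile(name) }(names[i+1])
		}
		if strings.Contains(current, term) {
			matches++
		}
		if next != nil {
			current = <-next // wait for the load that ran in the background
		}
	}
	return matches
}

func main() {
	files := []string{"a.txt", "b.txt", "notes.md"}
	fmt.Println(countMatches(files, ".txt")) // prints 2
}
```

Even on a single processor, the search of one file overlaps with the wait for the next, which is the throughput gain described above.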

Improving responsiveness

Concurrent programming makes our software more responsive because we don’t need to wait for one task to finish before responding to a user’s input. Even if we have one processor, we can always pause the execution of a set of instructions, respond to the user’s input, and then continue with the execution while we’re waiting for the next user’s input.

If again we think of a word processor, multiple tasks might be running in the background while we are typing. There is a task that listens to keyboard events and displays each character on the screen. We might have another task that is checking our spelling and grammar in the background. Another task might be running to give us stats on our document (word count, pages, and so on). All these tasks running together give the impression that they are somehow running simultaneously. What’s happening is that these various tasks are being fast-switched by the operating system on CPUs.

Programming concurrency in Go

Go is an ideal language to use to learn about concurrent programming because its creators designed it with high-performance concurrency in mind. The aim was to produce a language that was efficient at runtime, readable, and easy to use. This means that Go has many tools for concurrent programming. Let’s take a look at some of the advantages to using Go for concurrent programs.

Goroutines at a glance

Go uses a lightweight construct, called a goroutine, to model the basic unit of concurrent execution. As we shall see in the next chapter, goroutines give us a hybrid system between operating system and user-level threads, giving us some of the advantages of both systems.

Given the lightweight nature of goroutines, the premise of the language is that we should focus mainly on writing correct concurrent programs, letting Go’s runtime and hardware mechanics deal with parallelism. The principle is that if you need something to be done concurrently, create a goroutine to do it. If you need many things done concurrently, create as many goroutines as you need, without worrying about resource allocation. Then depending on the hardware and environment that your program is running on, your solution will scale.
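As a rough illustration of "create a goroutine to do it", the sketch below starts one goroutine per input value. The function name `squareAll` is made up for this example, and using `sync.WaitGroup` to wait for completion is our choice here, not something prescribed by the text above.

```go
package main

import (
	"fmt"
	"sync"
)

// squareAll starts one goroutine per input and waits for all of them.
func squareAll(nums []int) []int {
	results := make([]int, len(nums))
	var wg sync.WaitGroup
	for i, n := range nums {
		wg.Add(1)
		go func(i, n int) {
			defer wg.Done()
			results[i] = n * n // each goroutine writes only its own slot
		}(i, n)
	}
	wg.Wait() // block until every goroutine has called Done
	return results
}

func main() {
	fmt.Println(squareAll([]int{1, 2, 3, 4})) // prints [1 4 9 16]
}
```

The code does not say how many processors to use. The Go runtime decides how the goroutines are scheduled on whatever hardware is available.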

In addition to goroutines, Go provides us with many abstractions that allow us to coordinate concurrent executions working together on a common task. One of these abstractions is known as a channel. Channels allow two or more goroutines to pass messages to each other. This enables the exchange of information and synchronization of the multiple executions in an easy and intuitive manner.
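To make the idea of passing messages concrete, here is a small sketch in which one goroutine receives numbers over a channel and sends the total back over a second channel. The name `sumOverChannel` is an assumption made for this example.

```go
package main

import "fmt"

// sumOverChannel sends each number to a worker goroutine over a channel
// and reads the total back over a second channel.
func sumOverChannel(nums []int) int {
	numbers := make(chan int)
	total := make(chan int)

	// The worker receives until the numbers channel is closed.
	go func() {
		sum := 0
		for n := range numbers {
			sum += n
		}
		total <- sum
	}()

	for _, n := range nums {
		numbers <- n // blocks until the worker is ready to receive
	}
	close(numbers) // signals that no more values are coming
	return <-total
}

func main() {
	fmt.Println(sumOverChannel([]int{1, 2, 3})) // prints 6
}
```

Because the channels are unbuffered, each send also acts as a synchronization point between the two goroutines.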

Modelling concurrency with CSP and primitives

The other advantage of using Go for concurrent programming is its support for Communicating Sequential Processes (CSP). This is a manner of modelling concurrent programs in order to reduce the risk of certain types of programming errors. CSP is more akin to how concurrency happens in everyday life. In this model, we have isolated executions (processes, threads, or goroutines) working concurrently and communicating with each other by sending messages back and forth.

The Go language includes support for CSP natively. This has made the technique very popular. CSP makes our concurrent programming easier and reduces certain types of errors.

Sometimes the classic concurrency primitives found in many other languages, used with memory sharing, will do a much better job and result in better performance than CSP. These primitives include tools such as mutexes and condition variables. Luckily for us, Go provides us with these tools as well. When CSP is not the appropriate model to use, we can use the other classic primitives also provided in the language.
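For contrast with message passing, the following sketch shares one counter between several goroutines and protects it with a mutex. The `counter` type and `countConcurrently` function are hypothetical names used only for this illustration.

```go
package main

import (
	"fmt"
	"sync"
)

// counter is shared memory guarded by a mutex.
type counter struct {
	mu sync.Mutex
	n  int
}

// increment adds one to the counter while holding the lock.
func (c *counter) increment() {
	c.mu.Lock()
	defer c.mu.Unlock()
	c.n++
}

// value reads the counter while holding the lock.
func (c *counter) value() int {
	c.mu.Lock()
	defer c.mu.Unlock()
	return c.n
}

// countConcurrently increments one shared counter from several goroutines.
func countConcurrently(goroutines, perGoroutine int) int {
	var c counter
	var wg sync.WaitGroup
	for g := 0; g < goroutines; g++ {
		wg.Add(1)
		go func() {
			defer wg.Done()
			for i := 0; i < perGoroutine; i++ {
				c.increment()
			}
		}()
	}
	wg.Wait()
	return c.value()
}

func main() {
	fmt.Println(countConcurrently(10, 1000)) // prints 10000
}
```

Without the lock, the concurrent `c.n++` operations would race and the final count could come out lower than expected.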

It’s best to start with memory sharing and synchronization. The idea is that by the time you get to CSP, you will have a solid foundation in the traditional locking and synchronization primitives.

Scaling performance

Performance scalability is the measure of how well our program speeds up in proportion to the increase in the number of resources available to the program. To understand this, let’s try to make use of a simple analogy.

Imagine a world where we are property developers. Our current active project is to build a small multistory residential house. We give our architectural plan to a builder, and she sets off to finish the small house. The works are all completed in a period of 8 months.

As soon as we finish, we get another request for the same exact build but in another location. To speed things up, we hire two builders instead of one. This second time around, the builders complete the house in just 4 months.

The next time that we get a project to build the same exact house, we agree to hire even more help so that the house is finished quicker. This time we pay 4 builders, and it takes them 2.5 months to complete. The house has cost us a bit more to build than the previous one: paying 4 builders for 2.5 months (10 builder-months) costs us more than paying 2 builders for 4 months (8 builder-months), assuming they all charge the same.

Again, we repeat the experiment twice more, once with 8 builders and another time with 16. With both 8 and 16 builders, the house took 2 months to complete. It seems that no matter how many hands we put on the job, the build cannot be completed faster than 2 months. In geek speak, we say that we have hit our scalability limit.
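The numbers in this analogy can be tabulated with a few lines of Go. The sketch below is only a worked version of the arithmetic: the `build` type and the `speedup` and `cost` helpers are names invented for this example, and cost is expressed in builder-months on the assumption that every builder charges the same.

```go
package main

import "fmt"

// build records one experiment from the analogy.
type build struct {
	builders int
	months   float64
}

// speedup compares a build's duration against the single-builder baseline.
func speedup(baselineMonths, months float64) float64 {
	return baselineMonths / months
}

// cost returns the total effort paid for, in builder-months.
func cost(b build) float64 {
	return float64(b.builders) * b.months
}

func main() {
	builds := []build{{1, 8}, {2, 4}, {4, 2.5}, {8, 2}, {16, 2}}
	baseline := builds[0].months
	for _, b := range builds {
		fmt.Printf("%2d builders: %.1f months, speedup %.1fx, cost %.0f builder-months\n",
			b.builders, b.months, speedup(baseline, b.months), cost(b))
	}
}
```

The speedup goes from 2.0x to 3.2x and then stalls at 4.0x for both 8 and 16 builders, while the cost keeps doubling from 16 to 32 builder-months. That plateau is the scalability limit.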

Amdahl’s Law

Amdahl’s Law states that the overall performance improvement gained by optimizing a single part of a system is limited by the fraction of time that the improved part is actually used.

Amdahl’s law tells us that the non-parallel parts of an execution act as a bottleneck and limit the advantage of parallelizing the execution. The image below shows how the theoretical speedup changes as we increase the number of processors.
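The relationship can also be explored numerically. The sketch below uses the usual formulation of Amdahl's law, speedup = 1 / ((1 - p) + p/n), where p is the fraction of the work that can run in parallel and n is the number of processors. The function name `amdahlSpeedup` and the value p = 0.75 are assumptions chosen purely for illustration.

```go
package main

import "fmt"

// amdahlSpeedup returns the theoretical speedup for a fixed-size problem
// where fraction p of the work can run in parallel on n processors:
// speedup = 1 / ((1 - p) + p/n).
func amdahlSpeedup(p float64, n int) float64 {
	return 1 / ((1 - p) + p/float64(n))
}

func main() {
	const p = 0.75 // assumed parallel fraction, chosen only for illustration
	for _, n := range []int{1, 2, 4, 8, 16, 1024} {
		fmt.Printf("%4d processors: speedup %.2fx\n", n, amdahlSpeedup(p, n))
	}
}
```

With a quarter of the work being serial, the speedup approaches but never reaches 1 / (1 - p) = 4x, no matter how many processors we add.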

Gustafson’s Law

In 1988 two computer scientists, John L. Gustafson and Edwin H. Barsis, reevaluated Amdahl’s law and published an article addressing some of its shortcomings. It gives an alternative perspective on the limits of parallelism. Their main argument is that, in practice, the size of the problem changes when we have access to more resources.

Suppose we were developing some software and had a large number of computing resources at our disposal. If we noticed that using only half of those resources gave the same performance, we could allocate the remaining resources to other things, such as increasing the accuracy or quality of the software in other areas.

The second point against Amdahl’s law is that when you increase the problem size, the non-parallel part of the problem typically does not grow in proportion with problem size. In fact, Gustafson argues that for many problems this remains constant. Thus, when you take these two points into account, the speedup can scale linearly with the available parallel resources.

Gustafson’s Law tells us that as long as we find ways to keep our extra resources busy, the speedup should continue to increase and not be limited by the serial part of the problem. This is only if the serial part stays constant as we increase the problem size, which according to Gustafson, is the case in many types of programs.
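Gustafson's view can be sketched in the same way. The commonly quoted formula is scaled speedup = (1 - p) + p × n, where p is the parallel fraction of the execution time as measured on the n-processor system. The name `gustafsonSpeedup` and the value p = 0.75 are, again, assumptions made for this example.

```go
package main

import "fmt"

// gustafsonSpeedup returns the scaled speedup when the problem size grows
// with the number of processors n and the serial part (1 - p) stays constant:
// speedup = (1 - p) + p*n.
func gustafsonSpeedup(p float64, n int) float64 {
	return (1 - p) + p*float64(n)
}

func main() {
	const p = 0.75 // assumed parallel fraction, chosen only for illustration
	for _, n := range []int{1, 2, 4, 8, 16} {
		fmt.Printf("%2d processors: scaled speedup %.2fx\n", n, gustafsonSpeedup(p, n))
	}
}
```

Here the speedup grows linearly with the number of processors, provided the extra processors are kept busy with a larger problem and the serial part does not grow with it.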

Summary

- Concurrent programming allows us to build more responsive software.

- Concurrent programs can also provide increased speedup when running on multiple processors.

- We can also increase throughput even when we have one processor if our concurrent programming makes effective use of the input/output wait times.

- Go provides us with goroutines which are lightweight constructs to model concurrent executions.

- Go provides us with abstractions, such as channels, that enable concurrent executions to communicate and synchronize.

- Go allows us the choice of building our concurrent application using either the communicating sequential processes (CSP) model or the classical primitives.

- Using a CSP model, we reduce the chance of certain types of concurrent errors; however, certain problems can run more efficiently if we use the classical primitives.

- Amdahl’s Law tells us that the performance scalability of a fixed-size problem is limited by the non-parallel parts of an execution.

- Gustafson’s Law tells us that if we keep on finding ways to keep our extra resources busy, the speedup should continue to increase and not be limited by the serial part of the problem.

Learn more about Concurrent Programming with Go here.